Introducing the Mendix ML Kit for Low-Code ML Deployment

Low-code application platforms (LCAPs) are an emerging market, with an expected growth of around 30% in the next few years. Almost at the same time as the rise of the LCAP market in the last decade, a new spring of Artificial Intelligence (AI) has started, powered by the ongoing increase of computing power combined with the availability of big data.

While LCAPs are revolutionizing “how” applications are built, AI and Machine Learning (ML) are revolutionizing “what” kind of applications can be built. These two trends are coming together as LCAPs mature and are adopted by enterprises to build complex mission-critical apps. Therefore, the next generation LCAP should support building the so-called “AI-Enhanced Business Apps.”

AI-Enhanced Business Apps, also known as Smart Apps, are apps that use an AI/ML model (often in their logic) to provide an automated, smarter, and contextualized user experience.

In 2020, Gartner predicted that “60% of organizations will utilize packaged artificial intelligence to automate processes in multiple functional areas by 2022.” This is already happening. A McKinsey survey in 2021 of 1,843 participants representing the full range of regions and industries showed that “56% of surveyed companies have adopted AI in at least one business function (up from 50% in 2020).”

Smart app use cases

AI-Enhanced Business Apps use patterns in the data to make predictions instead of relying on explicitly programmed rules. This often results in the automation of manual work or a more intelligent approach to a task or business process. In turn, this increases workplace efficiency, reduces costs and risks, and enhances customer satisfaction. Below are some example AI and ML use cases.

Sentiment Analysis and Classification

- Understand the sentiment of customer feedback (positive vs negative)

- Categorize customer feedback or requests into specific support or business categories

Object detection

- Detect defective products in a factory product line

- Detect the defect type in the products in a factory product line

- Classify medical images

- Count objects in a factory

Anomaly Detection

- Detect suspicious bank transactions

- Detect abnormal relationships between business metrics (e.g., an increase in product sales but a drop in revenue due to the wrong price tag)

- Detect anomalies in the inventory

Recommendations

- Recommend the best offer to insurance agents based on customer needs

- Recommend alternative products or services to online shoppers based on previously bought products

Forecasting

- Forecast cash flow based on the historical and seasonal trends

- Forecast the required inventory based on the sales trends

- Forecast demand for a hotel chain using internal and external factors

- Set dynamic prices based on demand forecasts

The challenges of ML deployment

The AI/ML landscape and tooling have advanced considerably in recent years, but deploying AI in production and integrating it into applications remains a key challenge.

Based on Gartner’s research, “Organizations report that one of the most significant obstacles they face in pursuing AI is integration to existing applications.” Gartner cites the same challenge a year later: “More than half of successful AI pilots never make it to the deployment stage.”

So far, Mendix customers have been able to integrate AI services with Mendix apps using REST APIs. This is a viable approach, especially for third-party AI services. However, locally built or open-source ML models then require a separate hosting service, the technical knowledge to deploy the model there, and extra effort and cost for hosting and maintenance.

But often customers need to embed their ML models into their Mendix app for various reasons, such as performance, privacy, and cost. The Mendix ML Kit gives customers the tooling to deploy those AI models in a Mendix app in an easy and low-code way.

Low-code deployment with the Mendix ML Kit

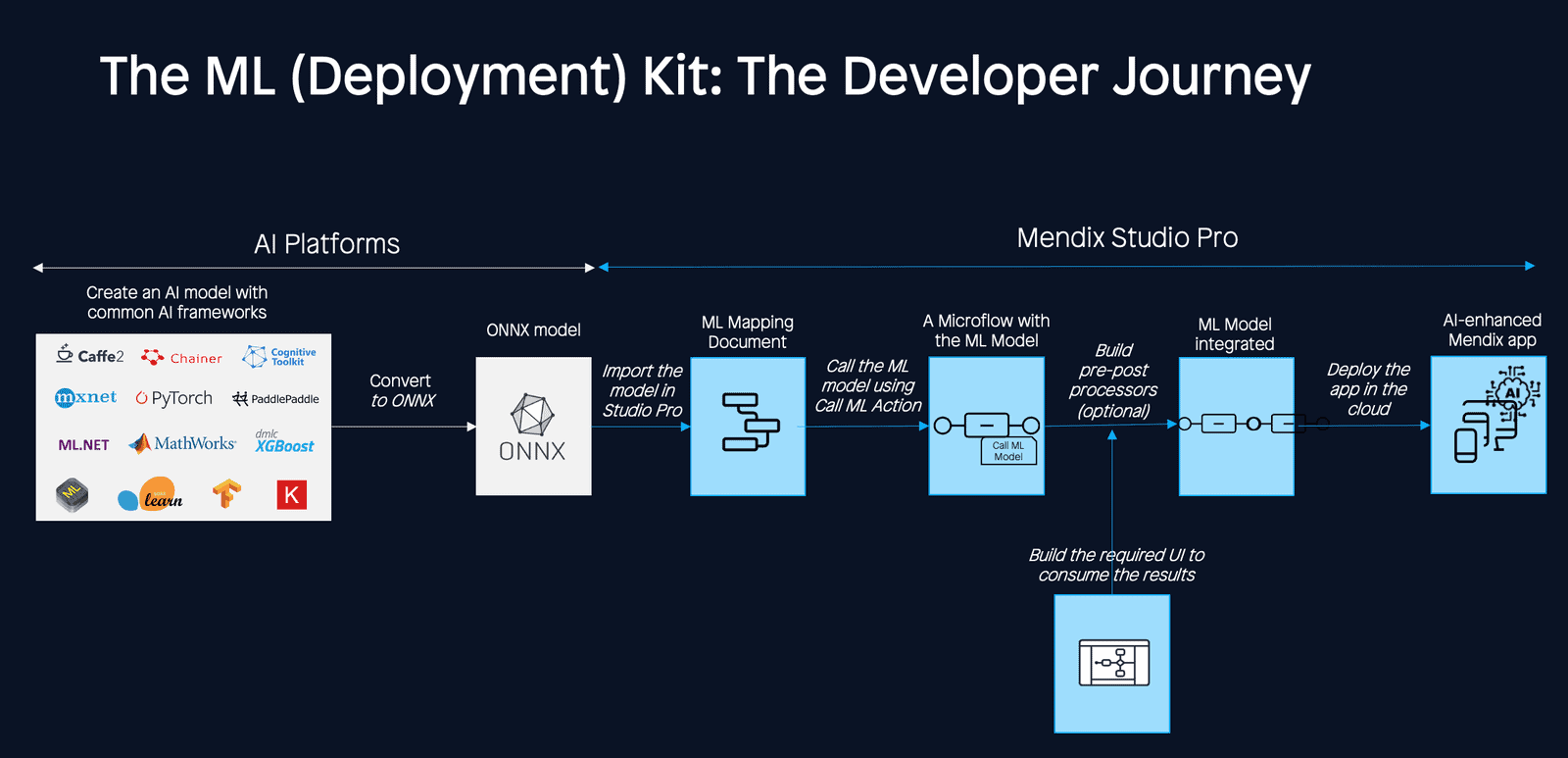

The Mendix ML Kit allows developers to deploy an ML model, built using a common ML framework and language, into the Mendix Studio Pro runtime in a low-code way.

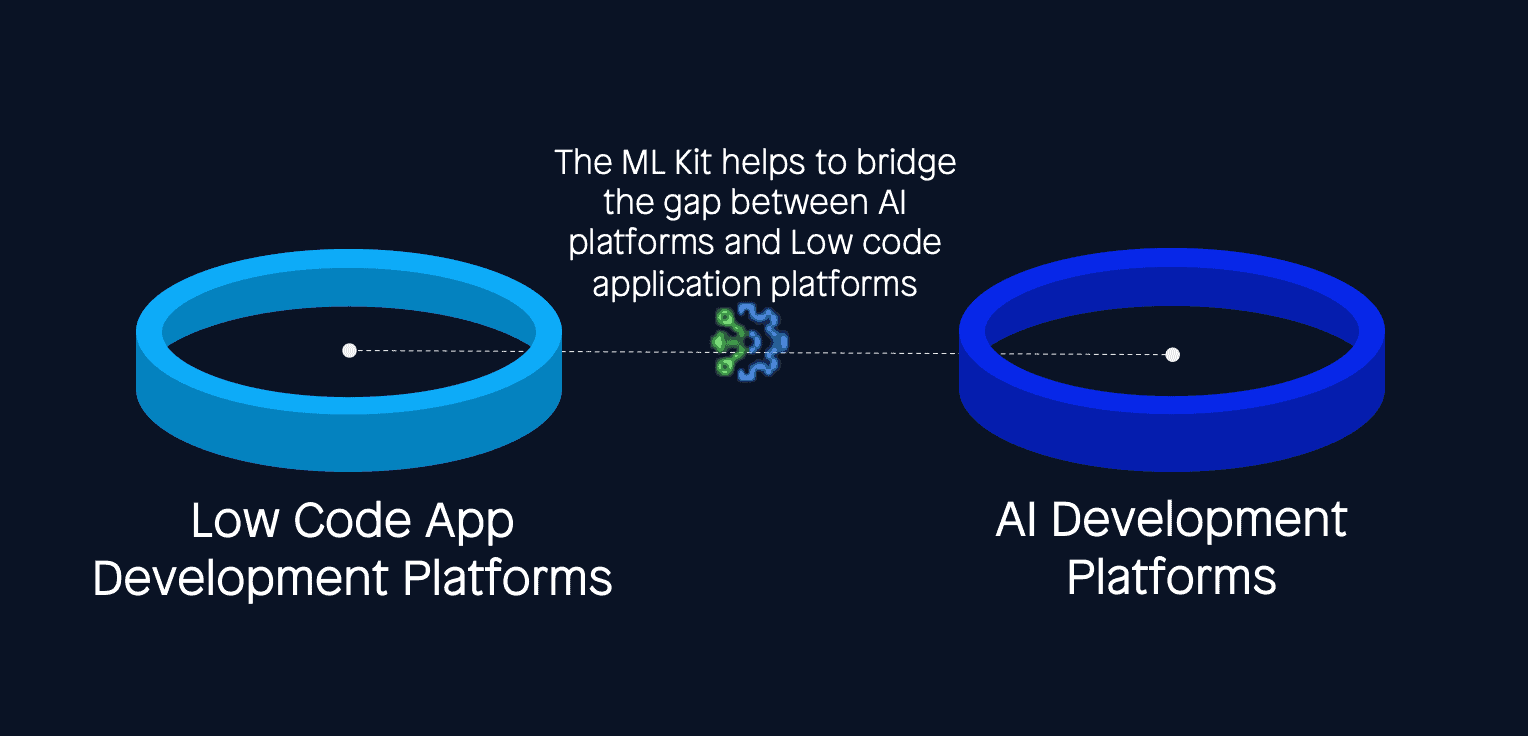

The ML Kit is based on the Open Neural Network Exchange (ONNX), an open-source format co-developed by Microsoft and Facebook in 2017 to enable framework interoperability. ONNX bridges the gap between AI frameworks, which are typically Python-based, and Mendix, which runs on the JVM. This means that you can train an ML model in your favorite ML framework, such as TensorFlow, then convert it into the ONNX format and consume it in a different environment like Mendix.
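The train, convert, and consume workflow described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the ML Kit's own procedure: it uses scikit-learn with the `skl2onnx` converter instead of TensorFlow to keep the example short, and it checks the exported file with the `onnxruntime` Python package, which stands in for the Mendix side. The function names and the Iris dataset are chosen only for illustration.

```python
from pathlib import Path
from typing import List, Sequence


def export_iris_model_to_onnx(path: Path) -> Path:
    """Train a small scikit-learn classifier and save it in ONNX format."""
    # Third-party imports live inside the functions so this module can be
    # imported even on machines where the ML stack is not installed.
    import numpy as np
    from sklearn.datasets import load_iris
    from sklearn.linear_model import LogisticRegression
    from skl2onnx import to_onnx

    X, y = load_iris(return_X_y=True)
    X = X.astype(np.float32)  # ONNX models commonly take float32 inputs
    model = LogisticRegression(max_iter=500).fit(X, y)

    # One sample row tells the converter the input type and feature count.
    onnx_model = to_onnx(model, X[:1])
    path.write_bytes(onnx_model.SerializeToString())
    return path


def predict_with_onnx(path: Path, rows: Sequence[Sequence[float]]) -> List[int]:
    """Load the ONNX file in a separate runtime and predict class indices."""
    import numpy as np
    import onnxruntime as ort

    session = ort.InferenceSession(str(path), providers=["CPUExecutionProvider"])
    input_name = session.get_inputs()[0].name
    batch = np.asarray(rows, dtype=np.float32)
    # For a converted classifier, the first output holds the predicted labels.
    labels = session.run(None, {input_name: batch})[0]
    return [int(label) for label in labels]
```

Calling `export_iris_model_to_onnx(Path("iris.onnx"))` and then `predict_with_onnx(Path("iris.onnx"), [[5.1, 3.5, 1.4, 0.2]])` should return one class index per input row. The point of the sketch is that the second function needs only the `.onnx` file, not the training framework.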

Benefits of the Mendix ML Kit

The Mendix ML Kit provides various advantages:

- Faster time-to-market by decreasing the time to ML deployment from weeks to days or hours.

- Easier integration by bridging the two worlds—AI Platforms and LCAPs—and enabling teams to integrate ML models built using third-party AI platforms into apps built with Mendix.

- Superior performance, thanks to lower network and model inference latency, achieved by integrating the model into the app runtime and running it on the JVM.

- Lower effort and cost with no need to acquire, deploy, or maintain other hosting services for the model deployment (vs. microservices integration).

- On-edge deployment (future): runtime integration opens up the possibility of running models on the edge (on-device ML). It is noteworthy that 60% of the image processing models are now deployed on edge.

Out-of-the-box pre-trained ML models

The ONNX community provides an ML model repository, called ONNX Model Zoo, where common computer vision and language models can be found. The ONNX Model Zoo is a collection of pre-trained, state-of-the-art models in the ONNX format contributed by community members.

Accompanying each model are Jupyter notebooks for model training and running inference with the trained model. The notebooks are written in Python and include links to the training dataset, as well as references to the original paper that describes the model architecture.

All the ONNX models in the ONNX Model Zoo should be compatible with the ML Kit. Customers can pick any ML model from this repository, customize it with their own data for their use case, and integrate it into their Mendix app using the ML Kit.

Below, you can find the list of ONNX models in the ONNX Model Zoo. You can use these pre-trained models to build your business use cases similar to the ones listed above, or other types of use cases for your business.

Vision

- Image Classification

- Object Detection & Image Segmentation

- Body, Face & Gesture Analysis

- Image Manipulation

Language

Other

Advanced deployment patterns with the Mendix ML Kit

The Mendix ML Kit combined with the Mendix Platform enables various state-of-the-art ML implementation patterns.

Sometimes multiple ML models are used to predict an output: the same data points are sent to a group of models, and all the predictions are then collected to find the best prediction (ensemble learning). Alternatively, several models can be used in a cascaded way, feeding the output of one model into another (cascaded inference). These deployment patterns can be easily achieved using Mendix Microflows and the Mendix ML Kit.
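To make the two patterns concrete, here is a minimal Python sketch of the logic. In a Mendix app this orchestration would live in a Microflow. The three "models" below are hypothetical threshold rules standing in for real ML models, and majority voting is only one assumed way of picking the "best" prediction.

```python
from collections import Counter
from typing import Callable, List, Sequence

Features = Sequence[float]  # assumed layout: (size_mm, weight_kg, discoloration)
Classifier = Callable[[Features], str]


# Hypothetical stand-in models: each is a simple threshold rule.
def size_model(f: Features) -> str:
    return "defective" if f[0] > 10.0 else "ok"


def weight_model(f: Features) -> str:
    return "defective" if f[1] < 0.5 else "ok"


def colour_model(f: Features) -> str:
    return "defective" if f[2] > 0.8 else "ok"


MODELS: List[Classifier] = [size_model, weight_model, colour_model]


def ensemble_predict(models: List[Classifier], features: Features) -> str:
    """Ensemble: send the same features to every model, keep the majority vote."""
    votes = Counter(model(features) for model in models)
    return votes.most_common(1)[0][0]


def defect_type_model(features: Features, verdict: str) -> str:
    """Second-stage model: consumes the first stage's output as an input."""
    if verdict != "defective":
        return "none"
    return "oversized" if features[0] > 10.0 else "other"


def cascade_predict(models: List[Classifier], features: Features) -> str:
    """Cascade: feed the output of the first stage into the second model."""
    verdict = ensemble_predict(models, features)
    return defect_type_model(features, verdict)


print(ensemble_predict(MODELS, [12.0, 0.7, 0.9]))  # defective (2 of 3 votes)
print(cascade_predict(MODELS, [12.0, 0.7, 0.9]))   # oversized
```

The example reuses the factory use case from earlier in the article: the first stage decides whether a product is defective, and the second stage names the defect type.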

In addition, an ML model can be exposed as a service using only the Mendix Platform and the ML Kit, without any need for additional third-party hosting services. Last but not least, batch inference, where multiple inferences are run with a single request to the model, is supported with Mendix and the Mendix ML Kit.
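The idea behind batch inference can be sketched as follows. This is an illustrative Python outline, not ML Kit code: `CountingLinearModel` is a hypothetical stand-in that scores a whole list of rows per call and counts how often it is invoked, and the batch size is an arbitrary choice.

```python
from typing import Iterator, List, Sequence

Row = Sequence[float]


class CountingLinearModel:
    """Hypothetical stand-in model: scores a batch of rows in one call."""

    def __init__(self, weights: Sequence[float]) -> None:
        self.weights = list(weights)
        self.calls = 0  # number of requests the model has received

    def __call__(self, batch: List[Row]) -> List[float]:
        self.calls += 1
        return [sum(w * x for w, x in zip(self.weights, row)) for row in batch]


def chunked(rows: List[Row], size: int) -> Iterator[List[Row]]:
    """Yield consecutive slices of at most `size` rows."""
    for start in range(0, len(rows), size):
        yield rows[start:start + size]


def batch_predict(model, rows: List[Row], batch_size: int = 32) -> List[float]:
    """Run inference one batch at a time instead of one row at a time."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    predictions: List[float] = []
    for batch in chunked(rows, batch_size):
        outputs = model(batch)
        if len(outputs) != len(batch):
            raise ValueError("model returned a different number of predictions")
        predictions.extend(outputs)
    return predictions


model = CountingLinearModel(weights=[2.0, 1.0])
rows = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
scores = batch_predict(model, rows, batch_size=2)
print(scores, model.calls)  # 5 scores from 3 model calls
```

With five rows and a batch size of two, the model is called three times rather than five. With a real model, fewer and larger calls usually reduce per-request overhead.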

You can read how to implement all these deployment patterns using the ML Kit and Mendix Studio Pro on the following pages:

Summary

With the ML Kit, we aim to empower customers to use AI in their apps to further improve business processes, automate manual tasks, and deliver customer satisfaction. If you have not used the Mendix ML Kit yet, give it a try. And let us know what you think: your feedback is often the basis of our next iteration.

To learn more about the Mendix ML Kit and how to use it, visit Mendix Docs. Or, find examples of ML deployment using the Mendix ML Kit, accompanied by their ML models in Jupyter notebooks, here.