How to Build Apps Without Code: 3 Steps to Build an OCR Mobile App

Everyone is talking about the latest and greatest in AI tech, and rightfully so: these technologies have the potential to solve many real-world problems. The challenge is that you need to be a superhuman coding machine to do anything with this stuff, which leaves most of us waiting on the sidelines.

But the times, they are a-changin’. Low-code development now makes it possible to build apps without code. Using visual development practices, automation, and abstraction, people with little to no development experience can create highly customized applications for nearly any purpose.

Here’s how I used low-code to develop a mobile app that uses machine learning algorithms to read handwritten notes — without writing a single line of code.

1. Start with a low-code template

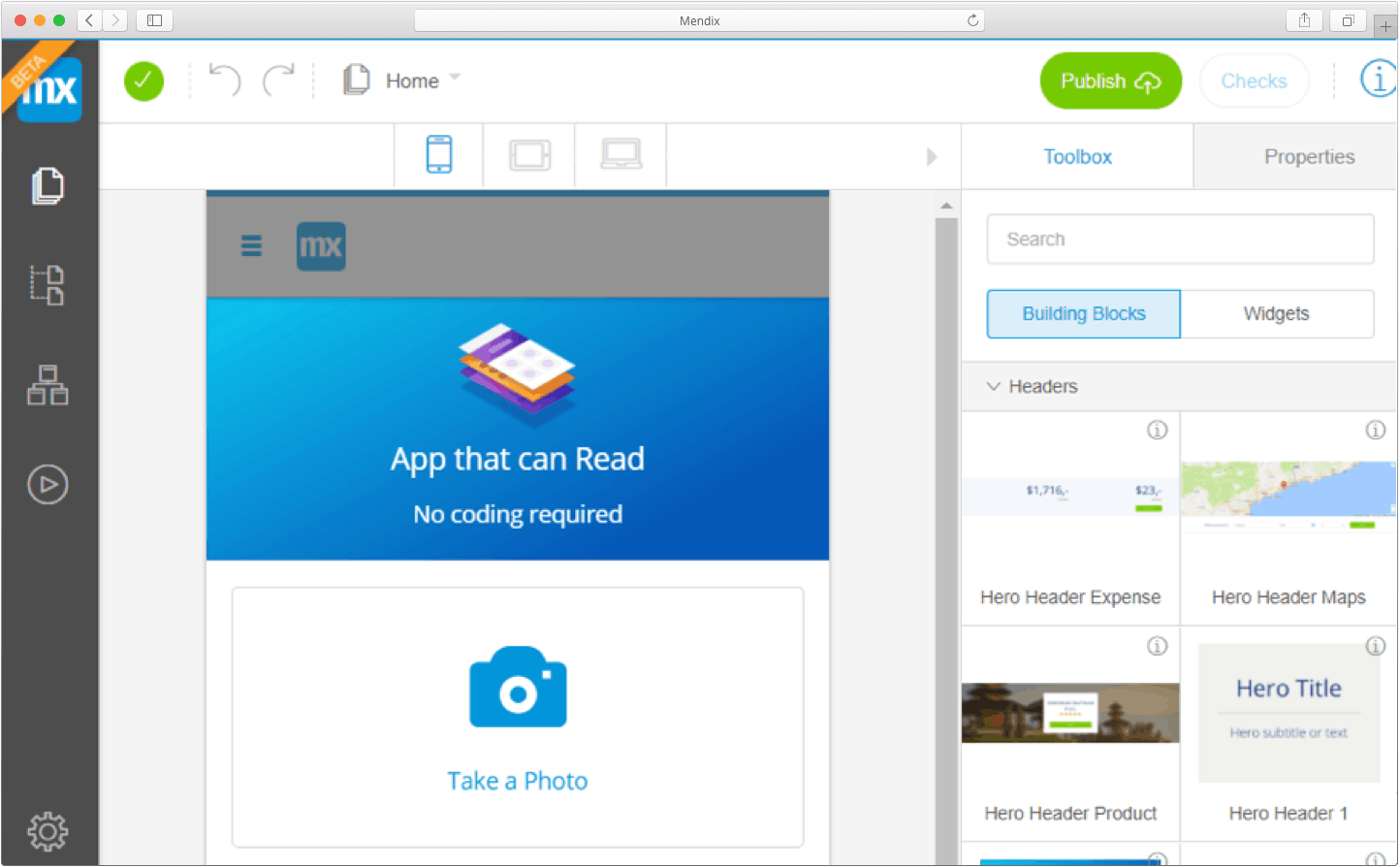

A smartphone does everything through apps, and I don’t know how to code mobile apps because I’m not a programmer. Luckily, I know about enterprise low-code application development platforms like Mendix. Low-code platforms let you build applications visually instead of in complex programming languages, which makes development easier and faster because you focus on thinking rather than typing.

I started with a simple homepage from a set of page templates. Then I added a button with a camera icon by dragging and dropping some UI elements onto the page.

I wanted the camera icon to open my phone’s camera so I could take a picture, so I added the Mendix PhoneGap camera widget to a data view on the page. When I take a picture, it is stored in the app’s database automatically. I then created a second page in the app to display a list of all the pictures in the database.

2. Use an OCR algorithm

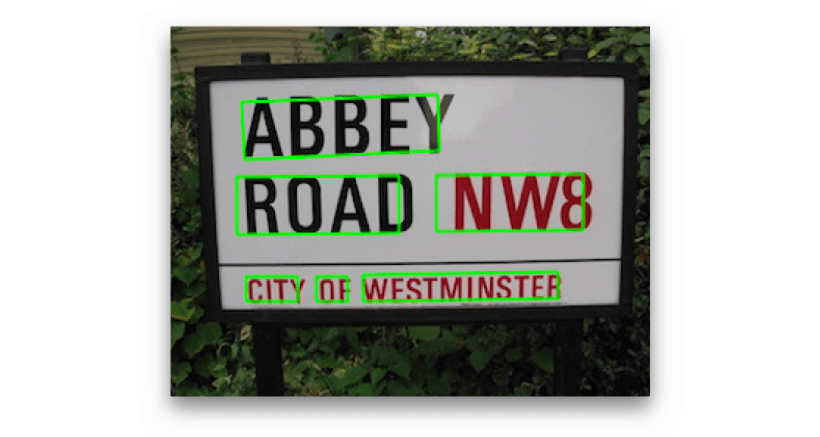

The technique for identifying text in pictures is called optical character recognition, or OCR for short. OCR algorithms have been around for a while, and thanks to advances in machine learning, they are getting better every day.

Creating your own OCR algorithm is very hard. You need advanced data science skills and very large datasets to have any shot at creating a decent OCR algorithm. Good news! Big tech companies with large data science teams and massive amounts of data have created great OCR algorithms for you to use. Google Cloud has a great OCR API and offers a generous free trial.

APIs are built for developers who understand code and JSON messages in a way that, frankly, I don’t. Luckily, someone created and shared a reusable Mendix component that consumes the Google Cloud Vision API. I downloaded the module, entered the API key Google generated for me, and I was ready to go. I added a button that sends my picture to Google and shows the characters that the Google Cloud Vision service extracted from it in a simple popup.
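If you are curious what a component like that hides from you, here is a minimal Python sketch of the kind of request it might send. This is an illustration, not the Mendix module’s actual implementation: the helper names (`build_request`, `extract_text`, `read_note`) are made up for this example, and the choice of the `DOCUMENT_TEXT_DETECTION` feature is an assumption based on the handwriting use case. The endpoint and JSON shape follow the public Cloud Vision REST API.

```python
import base64
import json
import urllib.request

# Public REST endpoint of the Google Cloud Vision API.
VISION_ENDPOINT = "https://vision.googleapis.com/v1/images:annotate"


def build_request(image_bytes: bytes) -> dict:
    """Wrap raw image bytes in the JSON body the Vision API expects."""
    return {
        "requests": [
            {
                # Images are sent inline as base64-encoded text.
                "image": {"content": base64.b64encode(image_bytes).decode("ascii")},
                # Assumption: this feature type suits dense or handwritten text.
                "features": [{"type": "DOCUMENT_TEXT_DETECTION"}],
            }
        ]
    }


def extract_text(response: dict) -> str:
    """Pull the recognized text out of a parsed Vision API response."""
    responses = response.get("responses", [])
    if not responses:
        return ""
    first = responses[0]
    if "error" in first:
        raise RuntimeError(first["error"].get("message", "Vision API error"))
    return first.get("fullTextAnnotation", {}).get("text", "").strip()


def read_note(image_path: str, api_key: str) -> str:
    """Send one picture to the Vision API and return the text it found."""
    with open(image_path, "rb") as f:
        payload = build_request(f.read())
    req = urllib.request.Request(
        f"{VISION_ENDPOINT}?key={api_key}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return extract_text(json.load(resp))
```

Calling `read_note("note.jpg", "YOUR_API_KEY")` (both values are placeholders) would return the recognized text as a string. The low-code module spares you exactly this part: encoding the picture, building the JSON message, and digging the text out of the reply.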


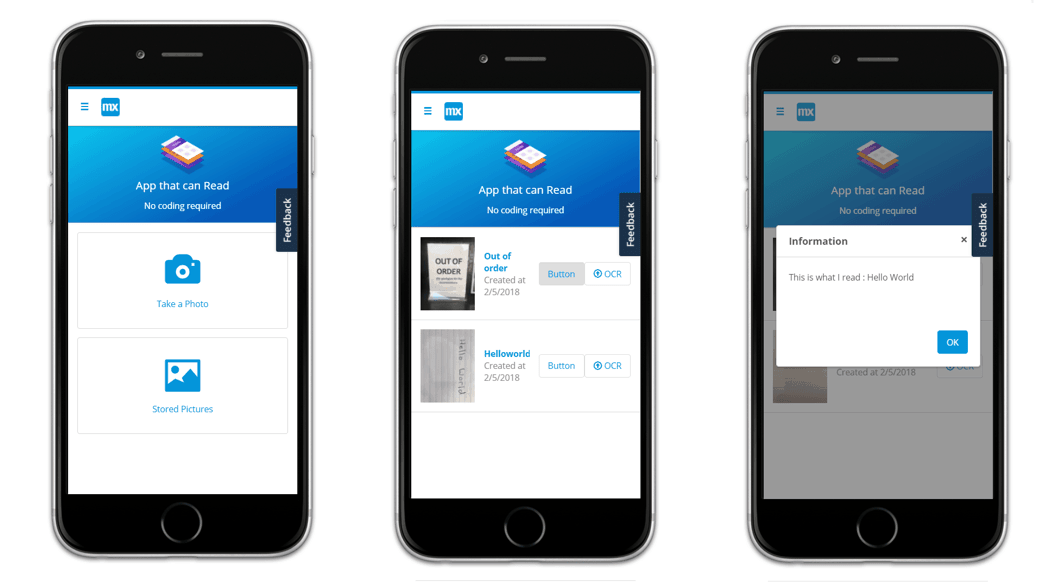

3. Deploy and start using the app

I deployed my app to the free Mendix Cloud and used the Mendix mobile app to view my app on my iPhone. I took a picture of a handwritten note with ‘Hello World’ on it, stored it in my app’s database, and after pressing the OCR button I received a popup saying, ‘Hello World’.

Getting a mobile app to understand ‘Hello World’ from a piece of paper isn’t very useful. But the same technology can be applied to digitize any handwritten text. From meeting notes to annoying tax forms, the possibilities are endless.

The degree to which complexity in technology is abstracted away in today’s software development platforms and APIs is simply amazing. The fact that someone like me — a business major with minimal development abilities — casually built an app without coding in just an hour is incredible.

Give it a shot — you can do it!

This blog post was originally published on February 21, 2018, and has been updated to include the most current information.